AI Platform Engineering for AEC: How We Run an AI-Native Company

AECFoundry applies the same platform engineering methodology internally that we bring to enterprise AEC clients — build foundational capabilities once, deploy compounding solutions across every workflow.

In our previous post, we explored the shift happening across AEC: AI handling volume, humans handling judgment. That framing explains what is changing. This post is about how — specifically, how we've structured AECFoundry around that principle, and why the architecture behind it is the same one we deploy for enterprise clients.

The short version: we run our company as a platform. Not a collection of AI tools bolted onto business processes, but a deliberate infrastructure of foundational capabilities on which every workflow, automation, and solution is built. The methodology has a name in the software world — platform engineering — and it translates directly to how we deliver AI-native solutions across architecture, engineering, and construction.

What Platform Engineering Actually Means

Platform engineering is the discipline of building self-service internal capabilities that abstract complexity and let teams ship faster. In software organizations, this typically means creating "golden paths" — opinionated, well-supported ways to build and deploy that eliminate the need to reinvent infrastructure for every new project. Gartner projects that 80% of large software engineering organizations will establish dedicated platform engineering teams by 2026.

The core idea is straightforward: invest once in foundational infrastructure, and every team that builds on it gets a compounding speed advantage. Instead of each developer configuring their own deployment pipeline, the platform team builds one pipeline that everyone uses — maintained, tested, secure by default. The developers focus on solving problems, not wrestling with setup.

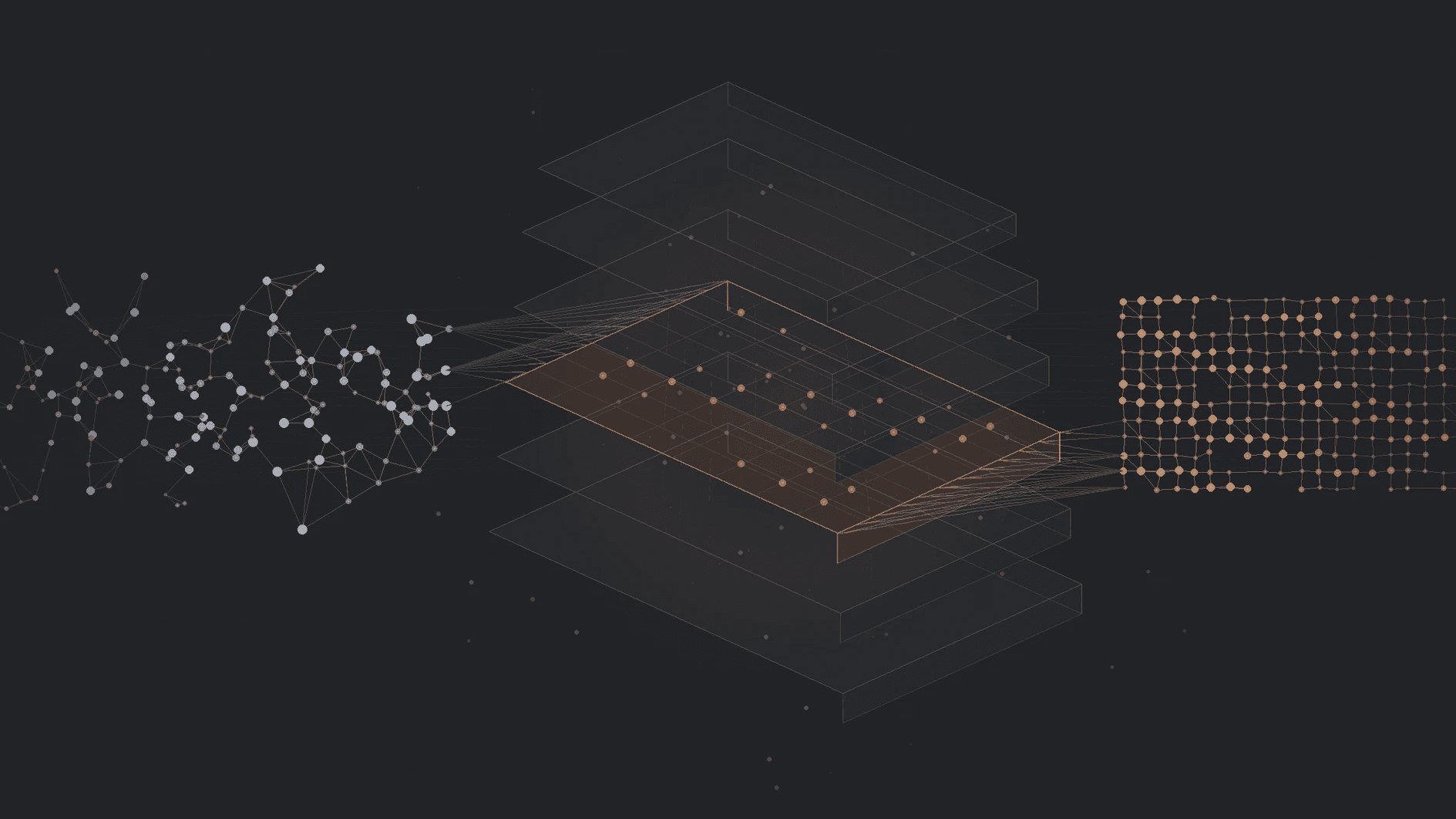

That is the traditional framing — platform engineering for software teams. But AI changes the equation. When AI can ingest data, classify it, route it, generate outputs, and learn from corrections, the concept of a platform expands beyond developer tooling into something broader: an operational platform for the entire organization. The golden paths are no longer just deployment pipelines and CI/CD templates. They are automated workflows where AI handles volume — processing documents, enriching records, drafting outputs, running evaluations — and humans step in at the decision points that require judgment, context, and domain expertise.

This is what we call AI platform engineering. The platform is no longer just infrastructure that humans operate on. It is a hybrid workforce: AI and human capabilities woven into a single system, each doing what it does best. Building this kind of platform is in some ways more natural than traditional platform engineering, because AI can absorb the unstructured, knowledge-heavy work that could not be automated before. The result is not a developer platform. It is an organizational and operational platform, one that scales how an entire company thinks, operates, and delivers.

That is the principle we built AECFoundry on. And it is the same principle we bring to every enterprise engagement.

AECFoundry as a Platform

We built our company the way a platform engineering team builds an internal developer platform. There is a foundation layer — our automation server, our operational data backbone, our AI context architecture — and there is a solution layer built on top of it.

The foundation handles the work that every process needs: ingesting data, classifying it, routing it, enriching it, and surfacing it for human review. When a meeting ends, the platform transcribes it, classifies the meeting type, extracts action items, and routes the output to the appropriate system — all before anyone asks. When a prospect books a call, the platform has already assembled a research brief. When an engineer picks up a task in the morning, the platform has already compiled the full context: what the client requested, how the product lead wants it built, and the relevant technical architecture.
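
The meeting flow described above can be sketched as a small chain of steps. This is a minimal illustration, not our production code: it starts from a finished transcript, the keyword and "TODO:" rules stand in for AI classification and extraction, and the names MeetingRecord, ROUTES, and process_meeting are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MeetingRecord:
    transcript: str
    meeting_type: str = "unknown"
    action_items: list[str] = field(default_factory=list)
    destination: str = "review_queue"


def classify(record: MeetingRecord) -> MeetingRecord:
    # Stand-in for an AI classifier: a keyword rule keeps the sketch runnable.
    is_client = "client" in record.transcript.lower()
    record.meeting_type = "client_call" if is_client else "internal"
    return record


def extract_action_items(record: MeetingRecord) -> MeetingRecord:
    # Stand-in for AI extraction: lines starting with "TODO:" are action items.
    lines = (line.strip() for line in record.transcript.splitlines())
    record.action_items = [
        line.removeprefix("TODO:").strip() for line in lines if line.startswith("TODO:")
    ]
    return record


# Hypothetical routing table; the destination names are assumptions.
ROUTES = {"client_call": "crm", "internal": "task_tracker"}


def route(record: MeetingRecord) -> MeetingRecord:
    # Anything without a known route falls back to human review.
    record.destination = ROUTES.get(record.meeting_type, "review_queue")
    return record


# Each step takes a record and returns it, so steps can be added or reordered.
PIPELINE = [classify, extract_action_items, route]


def process_meeting(transcript: str) -> MeetingRecord:
    record = MeetingRecord(transcript=transcript)
    for step in PIPELINE:
        record = step(record)
    return record


if __name__ == "__main__":
    notes = "Client asked about the facade schedule.\nTODO: send revised drawings"
    print(process_meeting(notes))
```

Because every step shares the same signature, swapping the keyword rule for a model call changes one function and leaves the rest of the flow untouched.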

The solutions — CRM automation, content production pipelines, meeting processing workflows, daily task briefings — are not standalone tools. They are configurations deployed on the same foundation. Each new capability we add compounds on the existing infrastructure rather than requiring its own bespoke stack. This is what makes a lean squad operate with the throughput and coverage of a team several times its size.
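
One way to picture "solutions as configurations" is a shared registry of capabilities, with each solution defined only as an ordered list of capability names. The sketch below assumes that shape; the capability bodies are placeholders, and CAPABILITIES, SOLUTIONS, and run_solution are illustrative names rather than our actual components.

```python
from typing import Callable

# Shared foundation: each capability is registered once and reused everywhere.
CAPABILITIES: dict[str, Callable[[dict], dict]] = {}


def capability(name: str):
    """Decorator that adds a function to the shared capability registry."""
    def register(fn: Callable[[dict], dict]) -> Callable[[dict], dict]:
        CAPABILITIES[name] = fn
        return fn
    return register


@capability("ingest")
def ingest(item: dict) -> dict:
    return {**item, "ingested": True}


@capability("classify")
def classify(item: dict) -> dict:
    # Placeholder rule standing in for an AI classifier.
    kind = "meeting" if "transcript" in item else "lead"
    return {**item, "kind": kind}


@capability("enrich")
def enrich(item: dict) -> dict:
    return {**item, "enriched": True}


# A solution is configuration only: an ordered list of capability names.
SOLUTIONS = {
    "meeting_processing": ["ingest", "classify"],
    "crm_automation": ["ingest", "classify", "enrich"],
}


def run_solution(name: str, item: dict) -> dict:
    for step in SOLUTIONS[name]:
        item = CAPABILITIES[step](item)
    return item


if __name__ == "__main__":
    print(run_solution("crm_automation", {"email": "a@example.com"}))
```

Adding a new solution here means adding one entry to SOLUTIONS, which is the compounding effect the paragraph above describes.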

The buy-versus-build principle governs every decision. If a tool solves a commodity problem well — transcription, team messaging, video conferencing — we buy it. If the problem is specific to how we operate and compete — our automation server, our AI context architecture, the structured knowledge layer that makes AI effective in our workflows — we build it. This is also how we advise our clients.

The AI-Enabled Forward-Deployed Engineer

Platform engineering only works if the people operating on the platform are equipped to use it. In traditional software, the platform team builds the paths and the product engineers build on them. In an AI-native company, a different kind of role emerges.

Palantir pioneered the concept of the Forward-Deployed Engineer (FDE) — an engineer who embeds directly with clients, building solutions on the company's platform to solve their specific problems. The platform product team then generalizes learnings from the field back into the platform, creating a flywheel between client delivery and platform improvement. Job postings for FDE roles surged over 800% between January and September 2025, and companies from OpenAI to Anthropic have adopted the model.

We operate a version of this that we call the AI-enabled FDE. It is the merging of four functions that used to be separate: domain expertise, product thinking, software engineering, and AI fluency. This is not a generalist who knows a little of everything. It is a practitioner who understands AEC workflows deeply enough to identify what should be automated, who thinks in products rather than projects, who can build and ship the solution, and who knows how to structure AI systems so they produce reliable, quality-gated outputs.

The AI-enabled FDE operates on the platform. They do not spend time configuring infrastructure, building deployment pipelines, or setting up monitoring — the platform handles that. Their entire focus is on the problem in front of them: understanding the client's workflow, identifying where AI handles volume and where humans handle judgment, and building the solution that fits precisely into that boundary.

Behind the FDEs, a separate team of AI and ML engineers focuses exclusively on the platform itself. They are not building client solutions. They are taking what the FDEs learn in the field — the patterns, the edge cases, the capabilities that keep recurring — and generalizing them into reusable platform components. A document extraction pipeline that an FDE builds for one client becomes a configurable capability available to every future engagement. This separation is deliberate: FDEs stay close to the problem, platform engineers keep the foundation compounding. The flywheel only works if both sides are running.

This role structure and cross-domain expertise, rarely found assembled in a single team, are central to both how we run internally and how we deliver to clients.

The Enterprise Delivery Model: Platform-Driven, Forward-Deployed

When we engage an enterprise AEC client, we follow the same platform engineering playbook we use on ourselves. The methodology has six phases, and the sequence matters.

AI-readiness assessment. Before building anything, we interview stakeholders, examine existing data, and assess the organization's readiness for AI-native operations. This is not a technology audit. It is a process discovery exercise: how does work actually flow through the organization? Where is institutional knowledge locked inside documents, individuals, or manual handoffs? Where are the decision points that require human judgment, and where is the volume work that AI should handle? The discovery process itself is AI-assisted — SME interviews are recorded and transcribed automatically, and AI synthesizes the findings in real time. By the end of a discovery session, structured process maps and capability assessments have already begun to emerge, rather than waiting weeks for a consultant to write them up.

Schema and process mapping. We map the client's data architecture and operational workflows in detail. This is where most AI initiatives fail — they skip the mapping and jump straight to tools. The result is AI that operates on assumptions about how the industry works, rather than reflecting how the specific organization actually operates. Our domain expertise means we speak the language and understand the structures before we write any code. AI analyzes the client's existing data — schemas, document formats, system integrations — and surfaces patterns, gaps, and transformation requirements that would take a manual team weeks to identify. The output is a precise map of where the organization is today and what needs to change.

Benchmarking and evaluation. Before configuring a single pipeline, we establish baselines. How accurately can current systems extract data from the client's documents? What is the error rate in existing manual processes? Which AI models perform best on the client's specific data types? These evaluations are built into the platform — not bolted on afterward — so that every decision about tooling, model selection, and architecture is data-driven from the start. This is also where continuous improvement begins: the benchmarks we set during this phase become the targets against which every future iteration is measured.

Platform configuration. We configure our foundational capabilities (document intelligence, workflow automation, decision support, context architecture) for the client's specific environment. AI assists here too: candidate pipeline configurations are suggested based on patterns from prior engagements, then refined by the FDE for the client's context. This is where platform engineering pays off: we are not building from scratch. We are deploying proven capabilities onto a new context, which is why we can deliver three-month MVPs for systems that would take conventional teams a year or more.

Solution deployment. Our AI-enabled FDEs build and ship solutions directly within the client's environment. Each solution inherits the platform's quality gates: citation verification, consistency checks, human-in-the-loop approvals at every critical decision point. The client gets a working system, not a proof of concept.
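
To make the idea of inherited quality gates concrete, here is a minimal sketch in which every draft passes automated checks first and only critical drafts are sent to a human approver. The Draft fields, the two toy gates, and the status strings are assumptions made for this example, and the approve callback stands in for whatever review interface a client actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Draft:
    text: str
    citations: list[str]
    critical: bool = False
    issues: list[str] = field(default_factory=list)


def citation_gate(draft: Draft, known_sources: set[str]) -> list[str]:
    # Every citation must point at a source the platform actually knows.
    return [f"unverified citation: {c}" for c in draft.citations if c not in known_sources]


def consistency_gate(draft: Draft) -> list[str]:
    # Toy consistency check: each cited source must be mentioned in the text.
    return [
        f"citation not referenced in text: {c}"
        for c in draft.citations
        if c not in draft.text
    ]


def review(draft: Draft, known_sources: set[str], approve: Callable[[Draft], bool]) -> str:
    """Run automated gates, then ask a human only when the draft is critical."""
    draft.issues = citation_gate(draft, known_sources) + consistency_gate(draft)
    if draft.issues:
        return "failed_gates"
    if draft.critical:
        return "approved_by_human" if approve(draft) else "declined_by_human"
    return "auto_released"


if __name__ == "__main__":
    sources = {"SPEC-01", "DWG-17"}
    routine = Draft("Per SPEC-01, the slab is 200 mm.", ["SPEC-01"])
    print(review(routine, sources, approve=lambda d: True))  # auto_released
```

The ordering is the design choice worth noting: automated gates run before the human is asked, so reviewer attention is spent only on drafts that are both clean and consequential.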

Platform feedback loop. What we learn from each engagement flows back into the platform. A common pattern or pipeline that solves a workflow across several engineering clients becomes a validated, reusable capability. The platform gets smarter and more capable with every deployment. This is productization in action: instead of building one-off custom software, we develop repeatable capabilities that compound within and across clients.
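
The feedback loop can be thought of as a ledger of which patterns have shown up at which clients. The sketch below promotes a pattern to a reusable capability once it has been seen at a set number of distinct clients; the PatternLedger class and the threshold of three are hypothetical, chosen only to illustrate the mechanism.

```python
from collections import defaultdict


class PatternLedger:
    """Tracks which field patterns recur across distinct clients."""

    def __init__(self, promote_after: int = 3) -> None:
        # Threshold is an assumption for this sketch, not a stated policy.
        self.promote_after = promote_after
        self._clients_by_pattern: dict[str, set[str]] = defaultdict(set)

    def record(self, pattern: str, client: str) -> None:
        # A set means repeat sightings at the same client count once.
        self._clients_by_pattern[pattern].add(client)

    def reusable_capabilities(self) -> list[str]:
        """Patterns seen at enough distinct clients to generalize."""
        return sorted(
            pattern
            for pattern, clients in self._clients_by_pattern.items()
            if len(clients) >= self.promote_after
        )


if __name__ == "__main__":
    ledger = PatternLedger(promote_after=3)
    for client in ["client_a", "client_b", "client_c"]:
        ledger.record("drawing_register_extraction", client)
    ledger.record("rfi_triage", "client_a")
    ledger.record("rfi_triage", "client_a")
    print(ledger.reusable_capabilities())  # ['drawing_register_extraction']
```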

Every phase of this process is either fully automated or AI-assisted. We built it this way deliberately, not to remove humans from the equation but to focus human attention on the part that matters most: translating tacit knowledge into executable logic, and transforming domain expertise into intelligent systems. That is the work that no automation can replace, at least not yet. It is the work our people spend their time on, and where we deliver the most value.

What Transfers Is the Approach

We are not our clients. Our internal platform is designed for a small, fast-moving AI company. Enterprise AEC firms operate at different scales, with different constraints, regulatory requirements, and organizational complexity. The specific solutions we build for ourselves (our content production pipeline, our meeting automation, our daily task briefing system) are calibrated for our context and technology stack.

What transfers is the methodology. Treat your organization as a platform, not a collection of disconnected tools. Invest in foundational capabilities that compound. Understand the boundary between AI volume and human judgment — design every system around it and re-engineer your processes based on what is possible, not what you already know. Deploy solutions on proven infrastructure rather than building bespoke stacks. Maintain quality gates and human oversight at every decision point that matters.

This is not a theoretical framework. At a small scale, it is the architecture that runs our company every day. At a large scale, it is the same architecture we configure and deploy for enterprises across the built environment with AECOS, our AI operating system for AEC.

The firms that will lead the next decade of AEC are not the ones buying the most AI tools. They are the ones building the platforms — the foundational capability layers that turn institutional knowledge into scalable, compounding operational advantage.

If your organization is ready to move from AI experiments to AI operations, that is a conversation we should have.