Human Work vs. AI Work: What’s Really Shifting in the Built Environment

The Job Hasn’t Changed. The Work Inside It Has

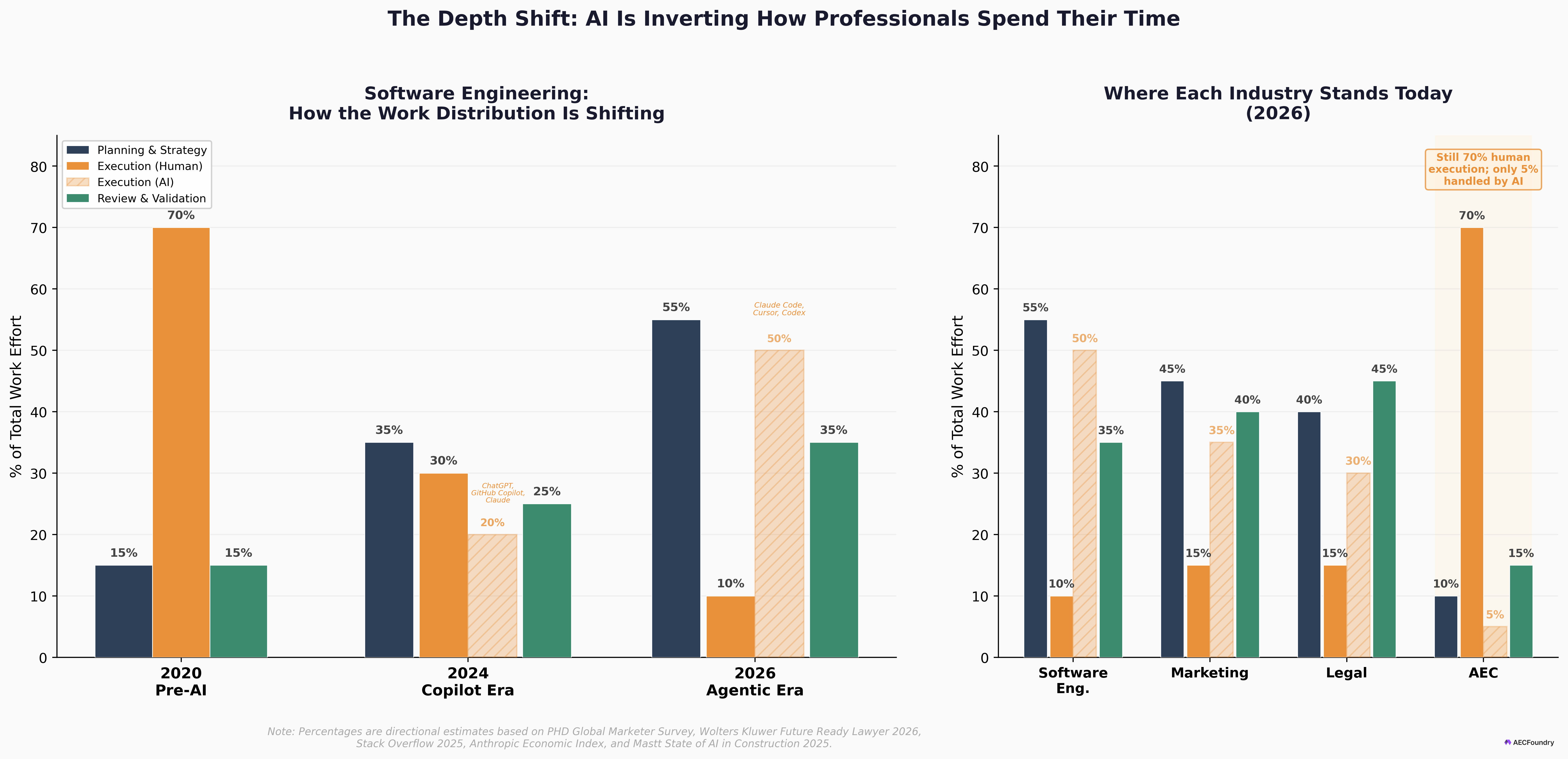

A software engineer at a mid-size firm still has the same title they had three years ago. But their day looks nothing like it used to. They spend most of their time reviewing AI-generated code, designing system architecture, and making judgment calls about what to ship — not typing syntax. At Anthropic, the company behind Claude, roughly 90% of code is now AI-generated. Boris Cherny, the creator of Claude Code, hasn’t written a single line by hand in months — shipping 10 to 30 pull requests a day, every one of them produced by AI. The human role hasn’t disappeared. It’s shifted from writing to directing.

A lawyer at a litigation firm describes an “80/20 reversal” — shifting from spending 80% of their time gathering information to spending 80% analyzing it. Harvey AI’s 2025 adoption study found that power users save nearly 37 hours per month, with junior lawyers handling strategic work earlier in their careers than ever before. A marketing director who used to hand-write every campaign asset now orchestrates an AI-assisted content pipeline, with 75% of their team’s effort redirected from production to strategy.

These aren’t predictions. These are current job descriptions. Across software, law, marketing, and finance, AI has already inverted what professionals actually do all day. The Anthropic Economic Index confirms the pattern: augmentation (52% of AI conversations) has overtaken pure automation (45%) as the dominant way people use AI at work. People aren’t handing off their jobs — they’re changing what those jobs involve.

Now look at construction. The project manager is still spending 35% of their time — over 14 hours a week — on non-productive documentation tasks, from searching for project data to resolving conflicts caused by fragmented information (Autodesk/FMI). The site engineer is still manually cross-referencing PDFs. Architects and estimators are still counting door symbols on floor plans by hand.

The inversion that has already reshaped every other knowledge profession is knocking on construction’s door. But the industry hasn’t opened it yet.

The Inversion: From Producing to Orchestrating

The shift has a name now: the planning-and-review paradigm. Where professionals once spent the vast majority of their time on execution (writing, drafting, processing, calculating), they now spend it on planning, judgment, and review. The World Economic Forum’s 2025 Future of Jobs Report projects that by 2030, work tasks will be split nearly evenly among human-only, machine-only, and hybrid human-machine work, a marked departure from today’s baseline, in which 47% of tasks are performed by humans alone.

The numbers bear this out. The St. Louis Federal Reserve’s November 2025 data shows AI adoption at work has reached 37.4% of U.S. workers, up from 33% earlier that year, with the share of work hours spent using generative AI climbing to 5.7%. And it’s not just about speed. Anthropic’s internal study of 132 engineers showed that as AI handles more routine execution, the average task complexity tackled by humans rose from 3.2 to 3.8 out of 5 over six months. Engineers aren’t doing less. They’re doing harder, more strategic work.

But here’s the honest complication. A 2026 study published in Harvard Business Review found that AI doesn’t reduce work — it intensifies it. Researchers at UC Berkeley tracked a 200-person tech company and found that employees work faster but take on broader scope, with 62% of associates reporting burnout. The planning-and-review paradigm may be more demanding, not less. Which is exactly why doing it without structure is a recipe for failure.

Construction’s $1.6 Trillion Version of This Problem

The AEC industry represents both the greatest untapped potential for the planning-and-review paradigm and the widest gap between knowing about it and actually doing it.

The scope of document-heavy work in construction is enormous. RFIs are just one example: a typical project generates roughly 10 RFIs per $1 million spent, each costing over $1,000 in administration and taking 10–14 days to process. But RFIs are the tip of the iceberg. Submittals, safety compliance documentation, specification cross-referencing, quantity takeoffs, daily progress reports, inspection checklists — the AEC industry runs on document workflows that span dozens of disciplines and hundreds of formats. Many of these processes are repetitive, high-volume, and already within reach of current AI capabilities.
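To make the RFI figures concrete, here is a back-of-the-envelope sketch in Python. The $50 million project value is a hypothetical input chosen for illustration, and the function and parameter names are ours. The rate and per-RFI cost are the rough figures quoted above, so the result should be read as a lower-bound estimate, not a benchmark.

```python
def rfi_overhead(project_value_usd, rfis_per_million=10, admin_cost_per_rfi_usd=1_000):
    """Estimate RFI count and minimum administrative cost for a project.

    Defaults follow the rough figures in the text: about 10 RFIs per
    $1 million spent, each costing over $1,000 to administer.
    """
    rfi_count = project_value_usd / 1_000_000 * rfis_per_million
    admin_cost = rfi_count * admin_cost_per_rfi_usd
    return rfi_count, admin_cost


# Hypothetical $50 million project (an assumption, not a figure from any study).
count, cost = rfi_overhead(50_000_000)
print(f"{count:.0f} RFIs, at least ${cost:,.0f} in administration")
# prints: 500 RFIs, at least $500,000 in administration
```

Under these assumptions, RFI administration alone comes to roughly 1% of project value, before counting the 10–14 days each RFI spends in process.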

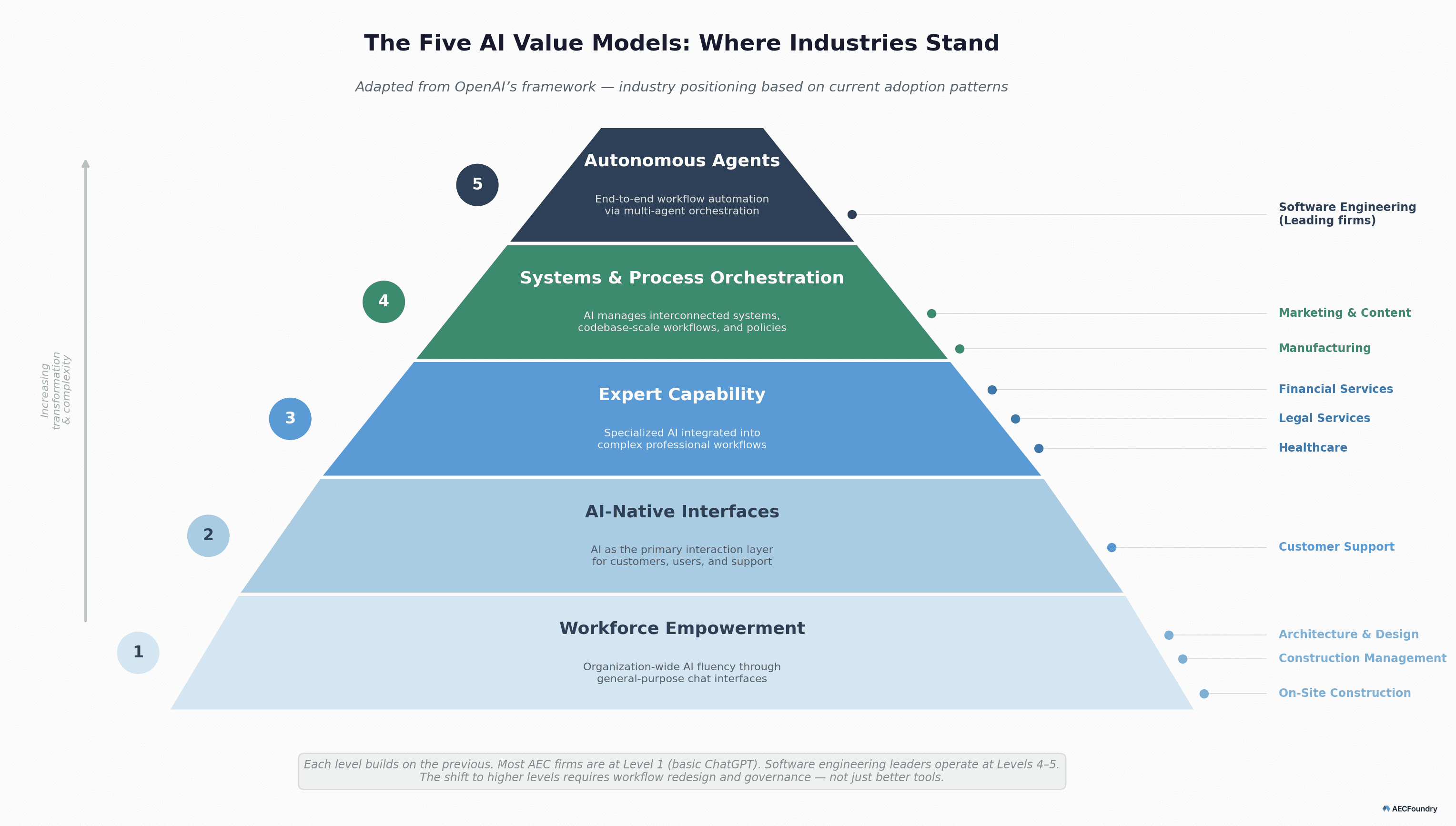

The Dodge Construction Network’s December 2025 survey found that 87% of contractors believe AI will meaningfully transform their business. But only 19% have adapted workflows, and fewer than 15% use AI-enhanced functions across the 24 project and company management areas studied. Anthropic’s labor market impact research visualizes this gap precisely: when it measures actual AI usage by occupation (not theoretical capability, but what people are genuinely doing with AI), construction shows one of the smallest footprints of any sector.

This is important to state plainly: we are not claiming that AI should automate all of AEC. Far from it. Engineering calculations carry life-safety responsibility. Complex spatial reasoning on architectural drawings — understanding what a floor plan means, not just what it contains — still exceeds current AI capability. Our own work on AECV-bench shows that even the best multimodal models achieve only about 51% accuracy on counting architectural symbols on floor plans. As the Institution of Civil Engineers has argued, engineering judgment must remain a fundamentally human competency.

But that’s exactly the point. AEC has a gigantic number of tasks across its disciplines. Not all of them can be done well with AI right now, and we’ll come to that distinction in the next section. But a substantial number of document-heavy workflows (RFI processing, submittal tracking, specification compliance checking, automated reporting) are already on the verge of meaningful acceleration. The technology is there. The implementation isn’t. Measured against OpenAI’s five-level AI value model, most of the AEC industry is still at level one: using ChatGPT for basic tasks like drafting emails and answering general queries. The ladder has five rungs, and the industry is standing on the first one.

The Confidence Principle: When AI Leads and When You Do

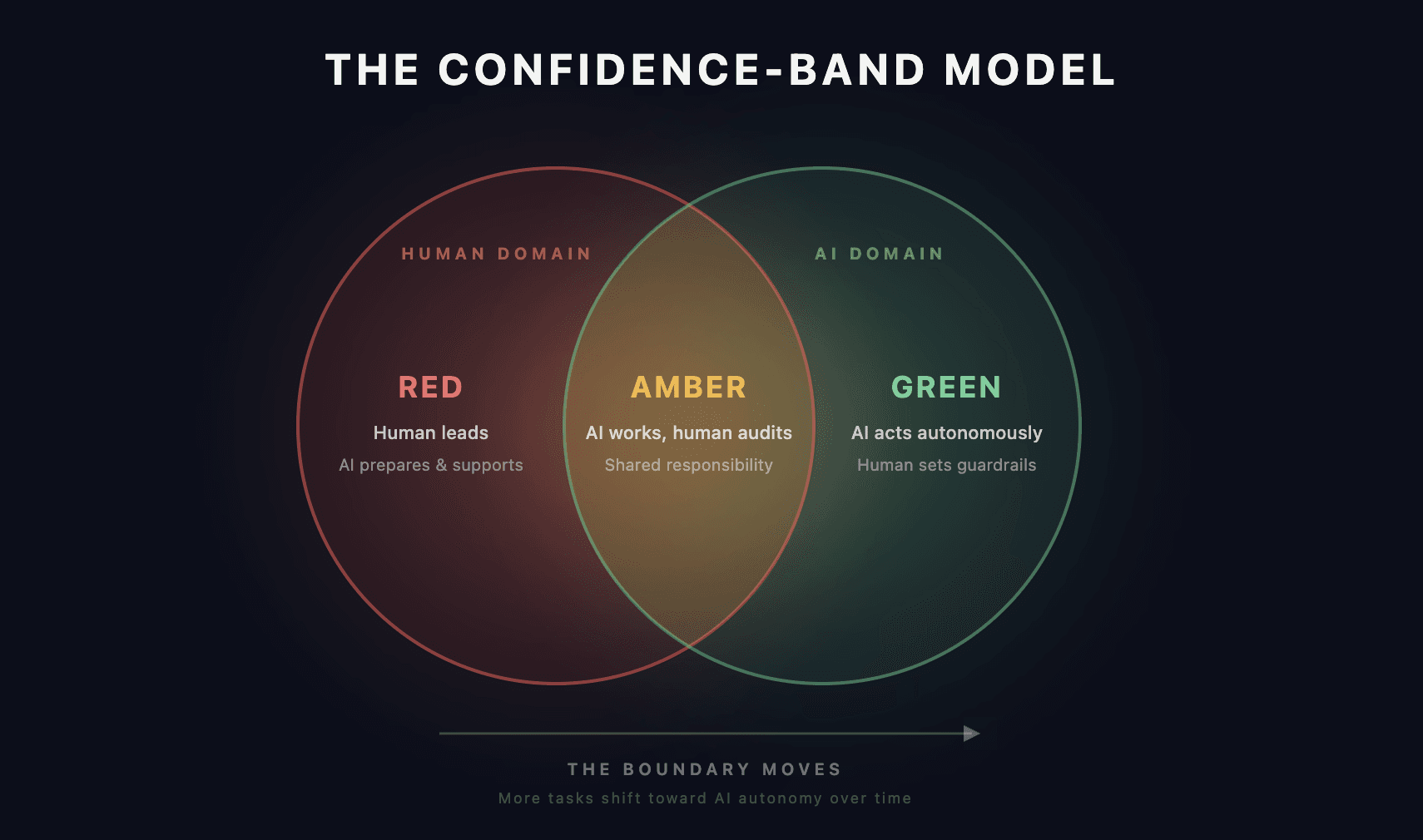

If AEC has hundreds of tasks, and only some of them are ready for AI, the practical question becomes: how do you decide which ones? The most useful framework we’ve found is the confidence-band model — a three-zone approach deployed across industries from healthcare triage to financial services.

Green zone (high confidence): AI acts autonomously. Routine document classification, standard data entry, matching certifications to worker profiles. The human reviews periodically but doesn’t gate every action. This is where most of the 14+ hours per week of administrative waste can be recovered.

Amber zone (medium confidence): AI works, human audits. Specification cross-referencing, submittal log generation, preliminary quantity takeoffs. The AI does the heavy processing but flags anything ambiguous for human review. This is where most AEC AI work should live today.

Red zone (low confidence): Human leads, AI assists. Complex spatial reasoning on floor plans, resolving contradictory project specifications, safety-critical engineering calculations. The AI assembles the evidence pack, but the human expert makes the call.
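The three zones can be sketched as a simple routing rule. This is a minimal illustration in Python, not a production design: the threshold values, the `Task` fields, the `safety_critical` override, and the confidence scores in the example are all assumptions we introduce here, and real cut-offs would need to be calibrated per task type.

```python
from dataclasses import dataclass
from enum import Enum


class Zone(Enum):
    GREEN = "AI acts autonomously, human reviews periodically"
    AMBER = "AI works, human audits"
    RED = "human leads, AI assists"


# Hypothetical cut-offs for illustration only.
GREEN_MIN = 0.95
AMBER_MIN = 0.70


@dataclass
class Task:
    name: str
    confidence: float              # assumed score between 0 and 1
    safety_critical: bool = False  # assumed flag set by a human, not by the model


def assign_zone(task: Task) -> Zone:
    """Route a task to a zone; safety-critical work is always human-led."""
    if task.safety_critical:
        return Zone.RED
    if task.confidence >= GREEN_MIN:
        return Zone.GREEN
    if task.confidence >= AMBER_MIN:
        return Zone.AMBER
    return Zone.RED


tasks = [
    Task("document classification", 0.98),
    Task("submittal log generation", 0.82),
    Task("resolving contradictory specifications", 0.40),
    Task("structural load calculation", 0.99, safety_critical=True),
]
for task in tasks:
    print(f"{task.name}: {assign_zone(task).name}")
```

Note that the last task lands in the red zone despite its high score. In this sketch the human-set flag takes priority over model confidence, so a capable model can still be held back on high-stakes work.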

The NHS’s Smart Triage system, developed by Rapid Health, offers a real-world proof point: AI autonomously allocates 91% of patient appointments across five urgency tiers, with wait times dropping 73%, from 11 to 3 days. The system works precisely because the zones are clear — AI handles routine triage, humans handle complex clinical judgment.

The key insight, articulated well in Feng et al.’s 2025 research on AI autonomy levels, is that autonomy is a design decision, separate from capability. A highly capable AI can — and sometimes should — be deliberately constrained to the amber zone for high-stakes tasks. As Anthropic’s own research on measuring agent autonomy argues, the metric that matters most isn’t accuracy on the primary task — it’s what the system does at the boundary of failure.

Critically, the field is dynamic. What AI can handle is expanding almost every month. New models appear, existing capabilities deepen, and tasks that sat firmly in the red zone a year ago are migrating into amber. In software engineering and marketing — fields where AI adoption is more mature — the depth of what AI can do autonomously keeps growing. But the same expansion is happening in breadth: industries like construction, where AI has so far only touched basic tasks, are beginning to see AI reach into core document workflows. The confidence zones are not fixed categories. They’re a moving boundary that must be constantly reassessed.

What doesn’t change, no matter how capable the models become, is the paradigm itself: human intent and human evaluation always stay in place. The planning-and-review framework expands to cover more tasks, but the human role — defining the goal, assessing the output, making the judgment call — remains structurally necessary.

What It Looks Like When You Actually Start

Frameworks are easy to agree with and hard to implement. The gap between understanding the confidence-band model and actually rethinking how your team spends its day is where most firms stall.

At AECFoundry, we’ve been living inside this shift for two years. We use Claude Code and OpenAI Codex for engineering AI workflows: building document processing pipelines, running evaluations against our AECV-bench dataset, scaffolding data infrastructure, and designing software architecture. And we use Cowork for operational orchestration: marketing, business development, project management, and financial and admin tasks. In both cases, AI handles the heavy processing while humans retain full control over judgment, strategy, and quality decisions. We will cover both in more detail in upcoming posts.

It took deliberate design, not just tool adoption. We had to figure out, for our own work, which tasks belong in the green zone, which need human audit, and which require full human control. That assessment isn’t a one-time exercise — it’s something we revisit as models improve. The result isn’t that we work less. We still work the same hours, sometimes more. But we work on fundamentally different tasks: more time on planning and validating, which leads to better strategy and higher-quality output. Our work shifted from production to orchestration, and the quality of what we ship has measurably improved as a result.

We’ve described how we’re navigating this shift at a conceptual level, and we will share more detail soon. But every firm’s reality is different. The project types are different. The document ecosystems are different. The team structures are different.

So here’s the question we’d genuinely like to explore: How is your team starting to draw the line between what AI handles and what stays with humans? What’s working? Where are you getting stuck? We’d like to hear about it — in the comments, in a message, in a conversation.

Because the evidence from every other knowledge profession is clear. AI won’t replace AEC professionals. But the firms that learn to orchestrate AI will outbuild those still doing everything by hand — and the gap will only widen from here.

________________________________________________________________________________________________

AECFoundry helps architecture, engineering, and construction firms navigate this transition — from identifying which workflows belong in the green, amber, and red zones to designing the governance frameworks that make AI adoption sustainable. We've done it for our own practice, we've done it for enterprise clients across the AEC value chain, and we're ready to do it for yours. If you're thinking about where AI fits in your operations and want a partner who speaks both AEC and AI fluently, book a call with our team.