AI-Native Operations: How We Automate Our Company

Technology

5 min read

Think of a company as a platform. Most organisations bolt on AI like an aftermarket plugin — functional, attached, but not really integrated. An AI chatbot here, a copilot there. The tool works, but nothing else in the organisation feels it.

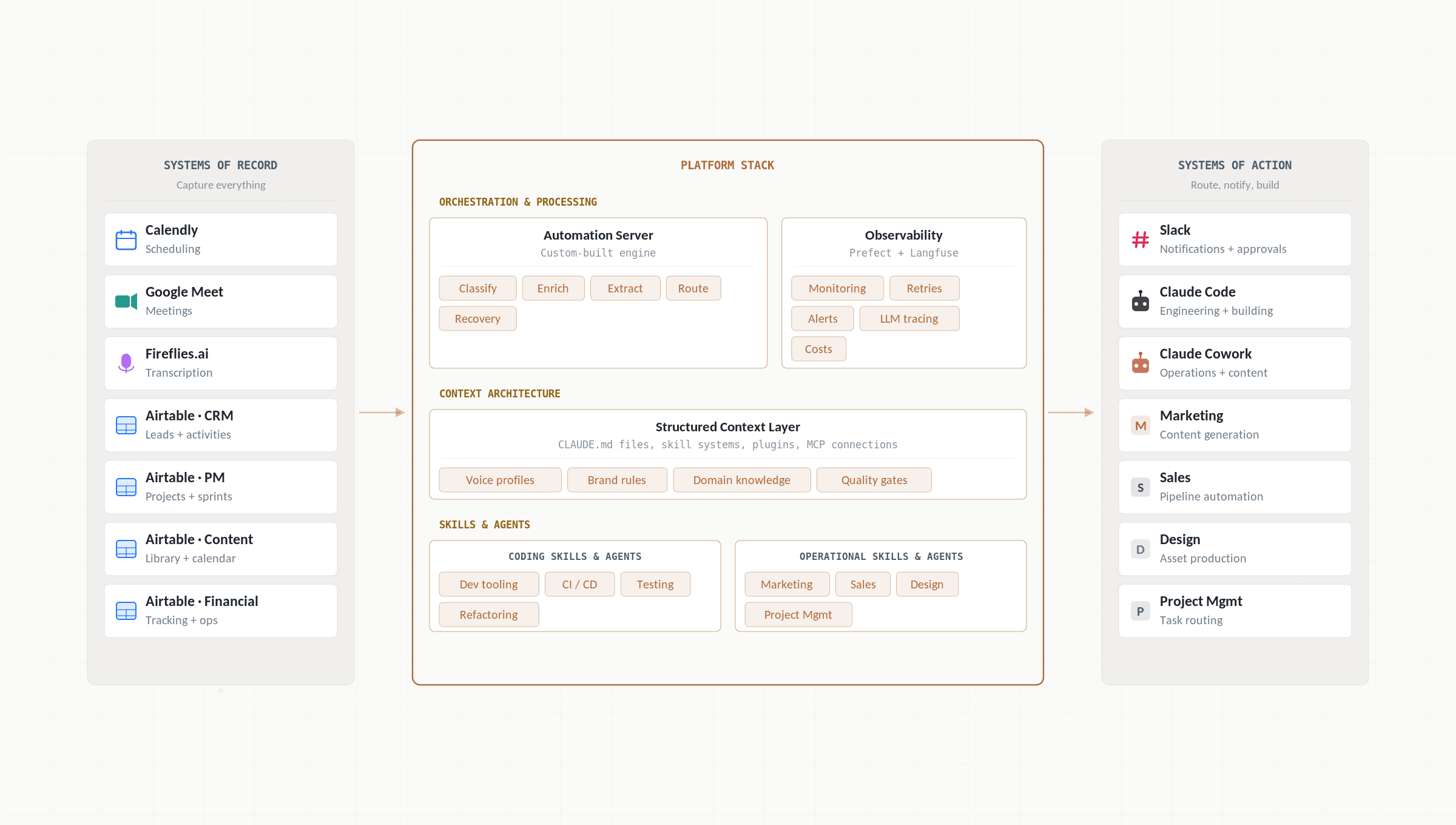

AI-native is different. It means building the company as an integrated platform from day one. We build foundational capabilities — an automation server, data pipelines, a context architecture — and deploy compounding solutions on top: tools and automations that serve every function across the business. Every meeting, every sales call, every line of code, every piece of content — captured, processed, and connected automatically. Inputs flow in (calls get recorded), the platform processes them (AI models classify, enrich, and generate), and outputs get routed to the right tools (code gets written, tasks get created, content gets published). Nothing operates in isolation. Remove a foundation layer, and everything built on top of it stops — even though each tool works fine on its own.

That’s how AECFoundry runs. Not because we sell AI solutions and want to sound impressive, but because we need to move fast, deliver reliably, and maintain quality with a small team serving clients whose problems are large. The infrastructure we describe here multiplies what our headcount can produce, and it is also the groundwork for every engagement we deliver to clients. In our previous post on AI Platform Engineering, we outlined how this discipline works at enterprise scale. This post shows what it looks like in practice inside our own company, where we have been running this way for a long time.

The Infrastructure: How Everything Connects

Here’s what our ecosystem actually looks like. Every platform exists for a reason. None of them work alone.

Video calls happen on Google Meet. Fireflies.ai records and transcribes them automatically — every word, every meeting, no exceptions. Those transcripts hit our automation server, where AI classifies the meeting (was that a lead call? an internal sync? a partner discussion?) and processes it differently depending on the type. For lead calls, the CRM gets updated. For internal meetings, structured notes land in our task tracker. For all of them, action items get extracted and proposed for approval.
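To make the classify-then-route step concrete, here is a minimal Python sketch. It is not our production code: `classify_meeting` is a hypothetical stand-in for the AI model call (a naive keyword stub so the example runs), the `TODO:` convention for action items is an assumption, and the handlers only return descriptions of what a real integration would do.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Transcript:
    title: str
    text: str


@dataclass
class Outcome:
    meeting_type: str
    actions: list[str] = field(default_factory=list)


def classify_meeting(t: Transcript) -> str:
    """Hypothetical stand-in for an AI classifier: naive keyword rules."""
    lowered = (t.title + " " + t.text).lower()
    if "pricing" in lowered or "proposal" in lowered:
        return "lead"
    if "partner" in lowered:
        return "partner"
    return "internal"


def extract_action_items(t: Transcript) -> list[str]:
    """Stub extractor: treats lines starting with 'TODO:' as action items."""
    return [line[len("TODO:"):].strip() for line in t.text.splitlines() if line.startswith("TODO:")]


def handle_lead(t: Transcript) -> list[str]:
    return [f"update CRM record for '{t.title}'"]


def handle_internal(t: Transcript) -> list[str]:
    return [f"write structured notes for '{t.title}' to task tracker"]


def handle_partner(t: Transcript) -> list[str]:
    return [f"log partner discussion '{t.title}'"]


# Each meeting type is processed differently.
ROUTES: dict[str, Callable[[Transcript], list[str]]] = {
    "lead": handle_lead,
    "internal": handle_internal,
    "partner": handle_partner,
}


def process(t: Transcript) -> Outcome:
    meeting_type = classify_meeting(t)
    actions = ROUTES[meeting_type](t)
    # For every meeting type, action items are proposed for approval, not created directly.
    actions += [f"propose for approval: {item}" for item in extract_action_items(t)]
    return Outcome(meeting_type, actions)
```

Keeping the routes in a dictionary means a new meeting type costs one classifier label plus one handler, while the approval proposal is appended for every type.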

Meanwhile, when someone books a call through Calendly, a different pipeline fires. Before the meeting even happens, AI has already researched the prospect — company background, industry, recent news, talking points, service fit. By the time we pick up the phone, we have a brief we didn’t write.
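A sketch of how the pre-call brief might be assembled, assuming a simplified `Booking` record (this is not Calendly’s actual webhook payload) and an injected `research` function standing in for the AI research step. The section names mirror the list above; everything else is hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Callable, Optional


@dataclass
class Booking:
    """Hypothetical, simplified booking event (not Calendly's real webhook schema)."""
    invitee_email: str
    company: str
    start_time: datetime


@dataclass
class Brief:
    company: str
    sections: dict[str, str]


# Section list taken from the post; the exact names are illustrative.
BRIEF_SECTIONS = ["background", "industry", "recent news", "talking points", "service fit"]


def build_brief(
    booking: Booking,
    research: Callable[[str, str], str],
    now: datetime,
) -> Optional[Brief]:
    """Assemble a pre-call brief; return None if the meeting has already started."""
    if booking.start_time <= now:
        return None
    # One research call per section; research(company, topic) stands in for the AI step.
    sections = {topic: research(booking.company, topic) for topic in BRIEF_SECTIONS}
    return Brief(booking.company, sections)
```

Injecting `research` keeps the assembly logic testable without calling a model; the start-time check simply avoids producing briefs for meetings that have already happened.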

All of this flows into Airtable — four interconnected bases that serve as our operational backbone: CRM, project management, content production, and financials. Slack handles approvals and notifications. Claude Code writes our software. Claude Cowork generates and updates operational documentation.

The value lies in the methodology, not in any specific piece of software. These particular tools power our ecosystem today because they work at our current scale, but the approach is platform-agnostic. The core principle is how systems are wired together into a unified platform, a foundation layer that compounds over time, rather than which brand of tool implements each piece.

Buy vs. Build: Every Decision Is Deliberate

We get asked: why these specific tools? The answer is a principle we apply to our own stack and recommend to our clients: buy commodity, build differentiation. Commodity tools (transcription, scheduling, databases) plug into the platform as interchangeable components. The differentiated layer, meaning the automation server, the context architecture, and the quality gates that wire everything together, is what we build ourselves. The platform is the competitive advantage, not any individual tool.

| Decision | Tool | Why |
|---|---|---|
| Buy | Fireflies.ai | Transcription is a solved problem. No reason to rebuild it. |
| Buy | Airtable | Flexible operational database with a strong API. Enough customisation for our workflows, while the vendor carries the infrastructure complexity. |
| Buy | Slack | Team communication is commodity infrastructure. |
| Buy | Google Meet | Video conferencing. We need it to work, not to be unique. |
| Build | Automation Server | Off-the-shelf tools (Make, Zapier, n8n) don't give us the development speed or level of customisation we need. |
| Build | Context Architecture | CLAUDE.md files, skill systems, plugins, MCP connections: the structured context that makes AI effective and aligned with the way we work. |

Three Platform Modes of Automation

Our platform provides three distinct automation modes — foundational capabilities that any workflow can compose from. Knowing which mode to use is half the design challenge.

Reactive (event-driven): These run without anyone asking. A prospect books a call, and AI enriches the lead. A meeting ends, and AI processes the transcript, extracts tasks, and posts them for approval. A webhook fires, and the pipeline handles it. This is the platform’s event layer at work: signals travel, processing happens, and by the time a human needs to act, the context is already assembled.
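The event layer can be pictured as a small publish/subscribe registry. This is a minimal in-process sketch with hypothetical event names (`call.booked`, `meeting.ended`); a real server would receive these as webhooks and likely queue them, which is left out here.

```python
from collections import defaultdict
from typing import Any, Callable

Handler = Callable[[dict[str, Any]], Any]
_handlers: dict[str, list[Handler]] = defaultdict(list)


def on(event_type: str) -> Callable[[Handler], Handler]:
    """Decorator that subscribes a handler to an event type."""
    def register(fn: Handler) -> Handler:
        _handlers[event_type].append(fn)
        return fn
    return register


def emit(event_type: str, payload: dict[str, Any]) -> list[Any]:
    """Run every handler subscribed to event_type; unknown events are ignored."""
    return [handler(payload) for handler in _handlers.get(event_type, [])]


@on("call.booked")
def enrich_lead(payload: dict[str, Any]) -> str:
    # Placeholder for the AI enrichment step.
    return f"enriched lead {payload['email']}"


@on("meeting.ended")
def process_transcript(payload: dict[str, Any]) -> str:
    # Placeholder for transcript processing and task extraction.
    return f"processed transcript {payload['meeting_id']}"
```

New reactions are added by subscribing another handler; nothing that emits the event has to change.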

Proactive (scheduled): These run on a clock. For example, every morning at 7 AM, AI queries today’s tasks, groups them by assignee, and posts a formatted list to Slack, with each task linking directly to its full description in our project management base. The team starts the day knowing exactly what to produce. No standup needed, no confusion: the system just runs.
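A minimal sketch of the morning digest’s formatting step, assuming a hypothetical `Task` record with a link back to the project management base. Links use Slack’s `<url|text>` mrkdwn syntax; fetching tasks from Airtable and posting the message to Slack are left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date


@dataclass
class Task:
    title: str
    assignee: str
    due: date
    url: str  # hypothetical link to the task's full description


def build_digest(tasks: list[Task], today: date) -> str:
    """Keep tasks due today, group them by assignee, and render Slack mrkdwn text."""
    by_assignee: dict[str, list[Task]] = defaultdict(list)
    for task in tasks:
        if task.due == today:
            by_assignee[task.assignee].append(task)
    lines = [f"*Tasks for {today.isoformat()}*"]
    for assignee in sorted(by_assignee):
        lines.append(f"\n*{assignee}*")
        lines.extend(f"• <{task.url}|{task.title}>" for task in by_assignee[assignee])
    return "\n".join(lines)
```

A scheduler would trigger this; for instance, the cron expression `0 7 * * *` fires daily at 7:00 in the server’s time zone.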

On-demand (human-invoked): These run when we call on them: writing a blog post, planning a marketing campaign, building a feature, creating a scope of work for a potential client. Here AI acts as a skilled colleague you bring in when the work requires it. Once invoked, it operates within a structured context, follows established patterns, and produces outputs that meet defined quality standards.

Think of it like a cycling echelon. The riders rotate who leads depending on conditions. On flat ground (routine operations), the reactive and proactive systems take the front — drafting for the humans behind them. When the road tilts uphill (complex decisions, creative direction, client relationships), humans move to the front. The formation adapts. But the group always moves faster than any individual rider could alone. That’s the point of the whole structure.

Quality Gates and the Human in the Loop

AI-native doesn’t mean trusting AI blindly. Every automated output passes through quality gates: citation verification (every URL fetched and checked), voice consistency scoring, brand alignment checks, deduplication across campaign history, code review and testing before merge, and human-in-the-loop approvals at every critical decision point.
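Two of these gates can be sketched in a few lines. This is an illustrative Python version, not our implementation: `fetch_status` is injected (in practice it might wrap an HTTP client; in a test it can be a lookup table), deduplication uses a normalized SHA-256 fingerprint as one simple choice, and the runner collects every problem rather than stopping at the first failure, so a reviewer sees the full list.

```python
import hashlib
import re
from typing import Callable

URL_RE = re.compile(r"https?://[^\s)>\]]+")

Gate = Callable[[str], list[str]]  # returns a list of problems; empty means pass


def citation_gate(fetch_status: Callable[[str], int]) -> Gate:
    """Every URL in the draft must resolve with an HTTP status below 400."""
    def check(draft: str) -> list[str]:
        return [f"broken citation: {url}" for url in URL_RE.findall(draft)
                if fetch_status(url) >= 400]
    return check


def fingerprint(text: str) -> str:
    """Case- and whitespace-insensitive hash used for deduplication."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode()).hexdigest()


def dedup_gate(history: set[str]) -> Gate:
    """Reject drafts whose normalized text already appears in campaign history."""
    def check(draft: str) -> list[str]:
        return ["duplicate of earlier content"] if fingerprint(draft) in history else []
    return check


def run_gates(draft: str, gates: list[Gate]) -> list[str]:
    """Run every gate and collect all problems (no short-circuit)."""
    problems: list[str] = []
    for gate in gates:
        problems.extend(gate(draft))
    return problems
```

A draft with an empty problem list would still go to a human for the final approval; the gates narrow what that person has to check.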

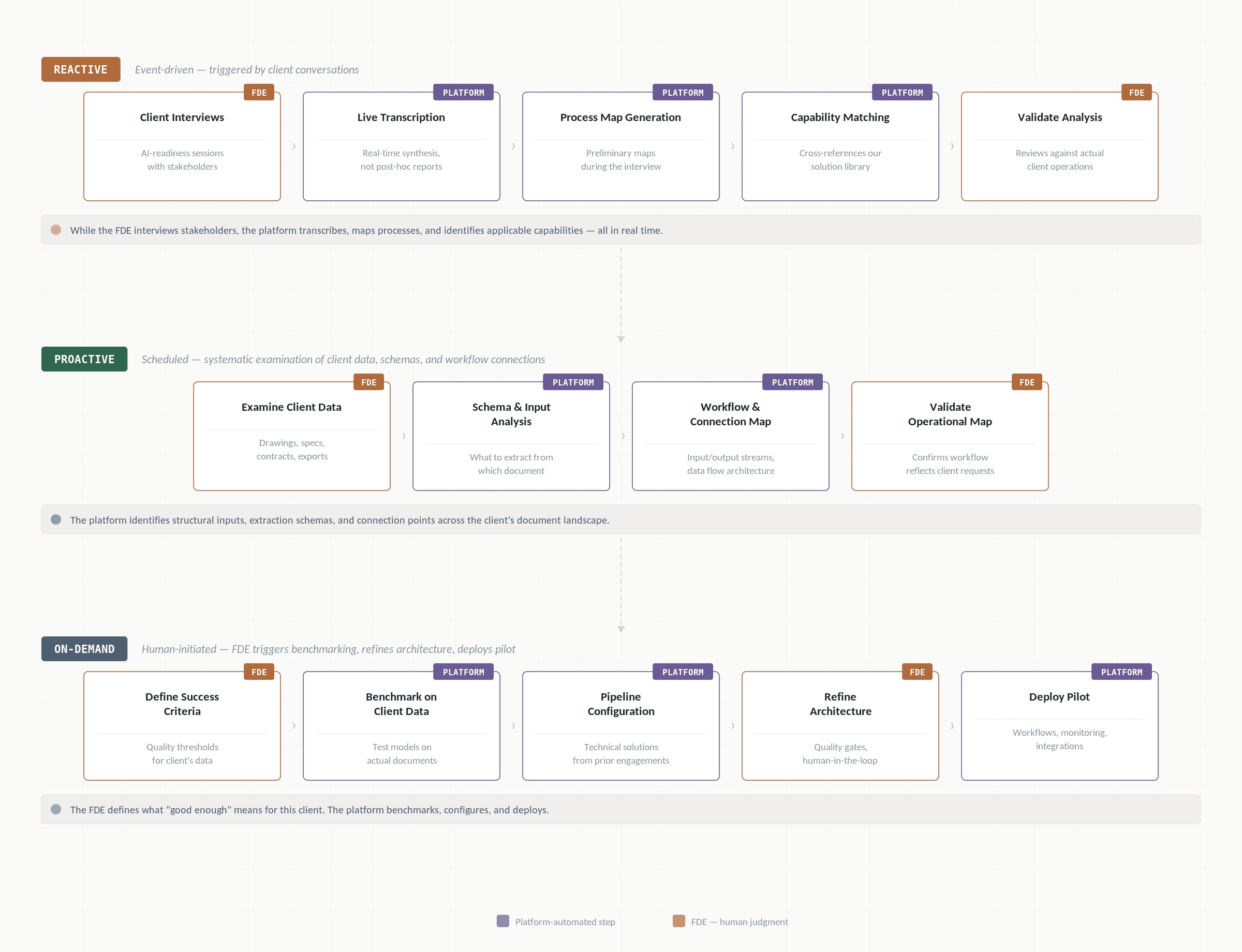

Consider how this plays out in a real client engagement. A construction enterprise approaches us to automate their document processing. The same three platform modes that run our internal operations — reactive, proactive, and on-demand — structure how we deliver.

Reactive — discovery through client conversations. Our Forward-Deployed Engineer (FDE) — the cross-functional role we described in our platform engineering post — runs structured AI-readiness interviews with stakeholders across the client’s organisation. While the FDE asks the questions, the platform works in parallel: transcribing every session in real time, generating preliminary process maps during the interview itself, and cross-referencing what it hears against our existing solution library to identify which capabilities could apply to the client’s described workflows. The FDE then validates this analysis against actual client operations. This step separates a working engagement from an expensive experiment — and it happens before a single line of code is written.
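The cross-referencing step can be approximated with something as simple as vocabulary overlap. The sketch below ranks hypothetical solution-library entries by Jaccard similarity with the transcript; it is a deliberately naive stand-in (a production system would more likely use embeddings or an LLM), and its output is only a candidate list for the FDE to validate.

```python
import re

# Hypothetical solution-library entries; descriptions are illustrative only.
SOLUTION_LIBRARY = {
    "drawing-register-extraction": "extract sheet numbers and revisions from drawing title blocks",
    "rfi-triage": "classify and route requests for information to the right discipline",
    "spec-compliance-check": "cross-reference specification clauses against compliance requirements",
}

STOPWORDS = {"the", "and", "to", "from", "of", "a", "we", "our", "right", "against"}


def terms(text: str) -> set[str]:
    """Lowercased word set minus a small stopword list."""
    return {w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS}


def match_solutions(transcript: str, threshold: float = 0.1) -> list[tuple[str, float]]:
    """Rank library entries by Jaccard overlap with the transcript's vocabulary."""
    said = terms(transcript)
    scored: list[tuple[str, float]] = []
    for name, description in SOLUTION_LIBRARY.items():
        desc = terms(description)
        union = said | desc
        score = len(said & desc) / len(union) if union else 0.0
        if score >= threshold:
            scored.append((name, round(score, 3)))
    return sorted(scored, key=lambda pair: pair[1], reverse=True)
```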

Proactive — systematic data examination. The FDE examines the client’s actual documents: drawings, specifications, contracts, system exports. For each document type, the platform identifies the structural inputs and schemas that must be extracted to support the desired workflow, the format transformations required, and what is machine-readable versus what requires interpretation. In parallel, the platform maps end-to-end workflows and establishes input–output connection points: how data enters the client’s processes, where it is transformed, and how it exits. The result is a complete operational map grounded in the client’s actual requests: exactly what needs to be extracted from which document, how that data is transformed for the workflow (embedded into the knowledge base, cross-referenced against compliance requirements, and so on), how data flows in and out, and which connections to existing software tie it all together.
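One way to record the output of this examination is a per-document-type schema. The field names, sources, and transform labels below are hypothetical examples; the point is that each field records whether it is machine-readable or needs interpretation, which decides where models and human review are applied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FieldSpec:
    name: str
    source: str              # where in the document the value lives
    machine_readable: bool   # False means it requires interpretation (model plus human check)
    transform: str = "none"  # downstream step, e.g. "embed" or "compliance_check"


# Hypothetical schemas; real ones come out of examining the client's documents.
SCHEMAS: dict[str, list[FieldSpec]] = {
    "drawing": [
        FieldSpec("sheet_number", "title block", True),
        FieldSpec("revision", "title block", True),
        FieldSpec("design_intent", "annotations", False, "embed"),
    ],
    "contract": [
        FieldSpec("contract_value", "schedule of rates", True),
        FieldSpec("liability_clauses", "body text", False, "compliance_check"),
    ],
}


def split_fields(doc_type: str) -> tuple[list[str], list[str]]:
    """Separate fields that can be parsed directly from those needing interpretation."""
    specs = SCHEMAS[doc_type]
    direct = [f.name for f in specs if f.machine_readable]
    interpreted = [f.name for f in specs if not f.machine_readable]
    return direct, interpreted
```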

On-demand — benchmarking and deployment. The FDE defines success criteria: human judgment on what “good enough” means for this client’s accuracy requirements and quality thresholds. The platform then benchmarks against those criteria. Our coding assistants, AI skills, and agent infrastructure test how well our current approach handles this particular problem and which models perform best on the client’s actual documents, quantifying extraction accuracy and error rates. The platform suggests technical solutions informed by prior engagements, and the FDE refines them for the client’s context: where to place review checkpoints, which decisions stay with humans, and how the architecture fits the client’s existing systems. Then comes deployment: the platform assembles the automation workflows, monitoring hooks, and integration connectors, and the FDE reviews, approves, and ships. The result is production-ready systems within a few weeks of the first client call.
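The benchmarking step reduces to scoring each candidate model against labelled examples and checking the FDE’s threshold. This sketch uses field-level exact-match accuracy as an assumed metric (the post does not specify one), and the model names and document fields are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

Record = dict[str, str]  # one document's extracted fields


@dataclass
class Criteria:
    min_accuracy: float  # FDE-defined "good enough" threshold


def field_accuracy(predictions: list[Record], truth: list[Record]) -> float:
    """Fraction of ground-truth fields the model reproduced exactly."""
    if len(predictions) != len(truth):
        raise ValueError("predictions and truth must cover the same documents")
    total = correct = 0
    for pred, gold in zip(predictions, truth):
        for key, value in gold.items():
            total += 1
            if pred.get(key) == value:
                correct += 1
    return correct / total if total else 0.0


def pick_model(
    results: dict[str, list[Record]],
    truth: list[Record],
    criteria: Criteria,
) -> tuple[Optional[str], dict[str, float]]:
    """Score every model; return the best one meeting the threshold (or None) and all scores."""
    scores = {model: field_accuracy(preds, truth) for model, preds in results.items()}
    passing = {model: s for model, s in scores.items() if s >= criteria.min_accuracy}
    best = max(passing, key=passing.get) if passing else None
    return best, scores
```

Returning every score, not just the winner, lets the FDE see how close the runners-up were; if no model clears the bar, the result is `None` rather than a silent fallback.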

Three modes, one internal platform: human effort is front-loaded while platform effort compounds. The FDE invests the most judgment early, in discovery and mapping, and the platform takes an increasing share of the execution as the engagement matures.

In our previous posts, we talked about how the real shift isn’t about AI replacing work — it’s about humans investing more effort in planning and reviewing rather than executing, and about building platform-level capabilities that make this sustainable at scale. This is where both principles become concrete.

Here’s the ratio we’ve designed around: AI handles roughly 90% of the volume work. Humans review the 10% where judgment matters most. The automation server processes every meeting transcript end to end. A human only touches the output at the approval step — “Are these the right tasks? Is this lead summary accurate?” The effort is concentrated at the point of highest leverage.
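The approval step can be sketched as a queue in which AI proposals stay pending until a human decides. The class and method names here are hypothetical; the property that matters is that `committed()` only ever returns approved items.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Proposal:
    text: str
    status: Status = Status.PENDING


@dataclass
class ApprovalQueue:
    proposals: list[Proposal] = field(default_factory=list)

    def propose(self, text: str) -> Proposal:
        """AI side: add a proposed task; nothing is created yet."""
        proposal = Proposal(text)
        self.proposals.append(proposal)
        return proposal

    def review(self, index: int, approve: bool) -> None:
        """Human side: the single touchpoint, approving or rejecting one proposal."""
        self.proposals[index].status = Status.APPROVED if approve else Status.REJECTED

    def committed(self) -> list[str]:
        """Only approved proposals become real tasks downstream."""
        return [p.text for p in self.proposals if p.status is Status.APPROVED]
```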

The result: lower time per task, higher quality output. Not because AI is perfect. Because humans are focused on the decisions that actually require human judgment, and nothing else.

The Multiplier: A Platform, Not Just Tools

Everything described above compounds into a single outcome: a small team delivering what would traditionally require entire departments.

Plenty of teams lose hours every week because meeting notes don’t get written, tasks don’t get captured, and follow-ups fall through the cracks. We solved that with automation. We don’t spend time managing our CRM; we have a CRM that updates itself after every call. The infrastructure absorbs the operational load that would otherwise eat into the time we spend building.

Here’s the important distinction: we are not our clients. Our clients are multi-billion-dollar enterprises with very different problems, scales, and requirements. The specific solutions we built for ourselves — our automation server, our marketing pipeline, our context architecture — are designed for a small, fast-moving AI company. Yours will look different.

What transfers is the approach. Understand where AI handles volume. Identify where humans add judgment. Design your systems around that boundary. The specific tools differ. The thinking doesn’t.

What makes AECFoundry competitive isn’t headcount. It’s the methodology. And we practice what we preach. When we tell a client “AI can transform your operations,” it isn’t a pitch. It’s a description of how we operate our own business. The methodology we bring to engagements is the same one that runs our company. That’s the credibility signal.

The Foundation, and What Comes Next

This is how we became faster and more efficient without sacrificing quality. AI handles volume. Humans handle judgment. The combination produces better outcomes in less time than either could alone.

The key isn’t just using AI. It’s knowing exactly where the human in the loop should be. Too much human involvement and you lose speed. Too little and you lose control and quality. Getting that boundary right is the real skill, and it’s what this entire infrastructure is designed around.

What we’ve described here is the foundation: the why and the what. Next come the specific concepts, patterns, and frameworks, the practical material that can help other companies and teams start building something like this themselves. This is the starting point. The playbook is coming.

The firms that will lead the next decade of AEC are not the ones buying the most AI tools. They are the ones building the platforms: the foundational capability layers that turn institutional knowledge into scalable, compounding operational advantage. If your organisation is ready to move from AI experiments to AI operations, that is a conversation we should have.